http://www.intellectualhistory.net/mill/index.html

I was delighted to see this project to create an “electronic resource” from James Mill’s commonplace books, and I also enjoyed the quaint phrasing of “electronic resource,” as in, “James Mill’s Commonplace Books: Now with electricity!”

I have long had a fondness for commonplace books as a way to organize information and ideas as they come up, which is handy for people like me who frequently want to capture thoughts. In my experience, their organizing principle is an index at the front in which you enter each subject, often under an alphabetized list of each consonant followed by all the vowels:

B—

A-

E-

I-

O-

U-

C—

A-

E-

I-

O-

U-

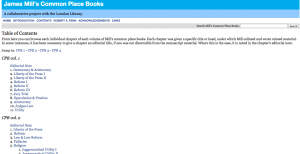

When you have an idea, you enter its topic in the appropriate place in the index, then simply add the number of the page on which you write the idea down. In this way, the book can be read chronologically but is also organized by subject. It seems that Mill did not organize his books this way, however, and so one of the accomplishments of the project was to organize the information.
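For anyone who thinks better in code, the index scheme described above can be sketched in a few lines of Python. This is only an illustration of the general idea, not anything taken from the Mill project: the names `index_key` and `CommonplaceBook` are made up, and I am assuming that the vowel under each letter is the first vowel that follows the topic's initial letter (with a fallback to the initial letter when there is none).

```python
from collections import defaultdict

VOWELS = "AEIOU"


def index_key(topic):
    """Return the (initial letter, vowel) slot for a topic heading."""
    word = topic.strip().upper()
    if not word:
        raise ValueError("topic must not be empty")
    first = word[0]
    # Assumption: file the topic under the first vowel after its initial
    # letter; if the word has no such vowel, reuse the initial letter.
    vowel = next((ch for ch in word[1:] if ch in VOWELS), first)
    return first, vowel


class CommonplaceBook:
    """Hypothetical model: pages in writing order plus a front index."""

    def __init__(self):
        self.pages = []                 # page n is stored at pages[n - 1]
        self.index = defaultdict(list)  # (letter, vowel) -> [(topic, page)]

    def add(self, topic, text):
        """Write an entry on the next free page and record it in the index."""
        self.pages.append((topic, text))
        page = len(self.pages)
        self.index[index_key(topic)].append((topic, page))
        return page

    def lookup(self, topic):
        """Return the page numbers recorded for a topic, in writing order."""
        slot = self.index.get(index_key(topic), [])
        return [page for name, page in slot if name.lower() == topic.lower()]


book = CommonplaceBook()
book.add("Beauty", "A first thought on beauty.")
book.add("Custom", "A note on custom and habit.")
book.add("Beauty", "A later thought on beauty.")
```

Reading `book.pages` front to back gives the chronological order, while `book.lookup("Beauty")` gathers the pages for one subject, which is the double reading the paper version allows.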

This edition of James Mill’s commonplace books is admirable for its user-friendliness and transparency of process. There is a clear user’s guide that orients the reader, clarifying the structure of the electronic object while also providing insight into the structure of the original artifact. It serves as a pleasant reminder that what the screen shows refers to a separate object that also exists out of sight. The introduction provides interesting context for why this artifact was chosen to “electronicize.” Of particular interest to me is that one can view the “editing principles” used to translate the books into their electronic form. The object in question is founded on a transcription of Mill’s books by Prof. Robert Fenn. The editing principles remind us that the electronic form could have been different had the books been transcribed by someone else, or had different design principles been applied.

In addition to accessing the contents of the original books, enhanced through the organization of material into topics, this object invites readers to participate in an ongoing organizing scheme through the creation of tags. I value this, again, because it serves to overcome the limitations of the initial coding scheme.

The interface is rather simple and unimpressive, but also unencumbered and easy to use, which may be helpful to those overwhelmed by too many “intuitive” buttons. Overall, this project is a very interesting case of how to extend the life and relevance of a textual object that is necessarily bounded by materiality and temporality (its chronology of creation). This, on top of the interesting purpose and structure of the original medium, serves as a sort of double look into how we can organize ideas, personally and collectively.